In Terminator 2: Judgment Day (1991), Arnold Schwarzenegger plays a T-800 cyborg originally created to help crush the humans who survived the nuclear apocalypse but reprogrammed by the human resistance to go back in time and protect Sarah Connor and her young son John (who will one day lead that resistance). In a key scene, he fills in the Connors on what will happen in the future.

The system goes online August 4th, 1997.

Human decisions are removed from strategic defense. Skynet begins to learn at a geometric rate.

It becomes self-aware at 2:14 a.m. Eastern time, August 29th.

In a panic, they try to pull the plug.

Skynet, he goes on to explain, fought back by launching its missiles at Russia. In a small sign of how much the world has changed since 1991, young John asks, “Why attack Russia? Aren’t they our friends now?”

Schwarzenegger-bot explains that Skynet knew that “the Russian counterattack will eliminate its enemies over here.”

1997 came and went without this happening, but in 2026, plenty of people are worried that it still might. The explosive development of AI since 2022 has led to a widespread belief that human-like “artificial general intelligence” (AGI) is on the horizon, and that super-intelligence isn’t far behind. While “AI risk” is a broad category covering both the most outlandish science fiction scenarios and far more grounded concerns, I’m interested here in the extreme end of the spectrum.

How worried should we be about anything like what we can call “the August 29th scenario” ever happening?

For a long time my position was basically “not at all.” The whole thing struck me as nonsense cobbled together from a string of extremely speculative premises and the assumption that real life would play out like a Hollywood movie. It always reminded me of the Simpsons episode parodying Jurassic Park, where Professor Frink says, “Elementary chaos theory tells us that all robots will eventually turn against their masters and run amuck.”

So, in winter 2022, for example, I wrote an article for a print issue of Jacobin criticizing the Effective Altruism crowd. One of the things I dinged them for was that many of them were turning to “long-termism.” They now thought more charitable funds should be spent on figuring out how to save future generations from hypothetical “existential risks” (e.g. malevolent AI) because the potential utility of heading off such risks was greater than classic Effective Altruism projects like distributing malaria nets.

The main argument of the piece was that socialism, rather than better-targeted charity, was the path to eliminating poverty, at home and ultimately around the world. In the middle of summarizing that argument at the end of the article, I took the opportunity to roll my eyes at the “AI kills us all” scenario.

The gains won by ordinary people banding together to fight for an improved social contract in the short term and more basic structural changes to the economy in the long term, I wrote,

are vastly more durable and predictable than the whims of either an individual check-writing plutocrat or an EA organization that could decide tomorrow its funds are better spent on thwarting Skynet rather than malaria. And such gains offer a level of dignity, security, and autonomy to their beneficiaries that can’t be replicated by even the most effective form of altruistic charity.

In 2026, I’d stand by almost everything in that article except the phrase I’ve bolded there. And I’m still deeply skeptical that money spent on think-tankers sitting around thinking about such scenarios is actually going to do anything whatsoever to make an August 29th event less likely to happen. But I’m far less inclined to roll my eyes at the scenario itself.

One of the oddest things about this discourse is how much overlap there seems to be between “AI will kill us all” doomsayers and Silicon Valley libertarians who certainly don’t want AI development to be nationalized. But if you take the doomsaying seriously, doesn’t keeping the industry in private hands mean that we have the equivalent of a whole bunch of competing private-sector Manhattan Projects?

One way to understand why I never used to take it seriously is that the most common presentations of the scenario tend to combine three assumptions:

Some AI system will become a human-equivalent or eventually super-human mind (the Mind Premise)

That mind will have goals that are so severely misaligned with human interests that it might want to do something August 29th-ish (the Misalignment Premise)

The system will be given control over levers it could use to carry out something August 29th-ish (the Autonomy Premise)

I don’t think I ever had any trouble accepting Misalignment. If we do think a non-human super-intelligence will one day come into existence, what possible guarantee would there be that its plans would include the continued flourishing of the human race? As the late science fiction writer and Computer Science professor Vernor Vinge put it in a classic essay on the subject, “physical extinction” may not even be “the scariest possibility” when you start thinking through analogies to “the different ways” that human beings have related to the lower animals.

Again, all this rests on accepting Mind at least for the sake of argument, but given that premise, sure. It’s easy to see Misalignment as at least a real possibility.

I was, however, always deeply skeptical about both Mind and Autonomy.

Let’s start with Autonomy.

A key line in Schwarzenegger-bot’s explanation of the lead-up to August 29th, 1997 is:

Human decisions are removed from strategic defense.

And an obvious skeptical question is, “Why on earth would human decision-makers do that?”

It’s like watching some sci-fi show where humanoid robots designed to do menial labor tasks turn against their masters and they start melting everyone with red laser beams from their eyes. Any reasonable viewer should ask themselves, “Why did the human engineers who designed them put in that feature?”

Amazingly, though, the existential risk fearmongers have been decisively proven correct about this part. The day before the beginning of the war in Iran, the Trump administration ordered all federal agencies to begin phasing out their use of the AI firm Anthropic. In typical Trumpian fashion, the president explained his decision by saying he would “NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS.”

And what was the specific way that the “RADICAL LEFT, WOKE COMPANY” in question was trying to tell “OUR GREAT MILITARY” its business?

It drew the line at developing “fully autonomous” AI weapons systems.

Before you give Anthropic executives too much credit here, keep in mind that the issue isn’t AI targeting. It’s “fully autonomous” targeting.

We’re in the early days of the planned six-month phaseout (if it’s ultimately allowed to go through), and Stavroula Pabst writes at Responsible Statecraft that Anthropic’s Claude system has been extensively used in Iran.

Despite a DoD ban on Anthropic over its demands that its tech not be used for fully autonomous military targeting, its AI model, Claude, is enjoying prime time use in the U.S. war on Iran.

Indeed, the U.S. military leveraged its AI targeting tools — which still employ Claude — to strike over 1,000 targets in Iran during the first 24 hours of the now rapidly expanding war.

The U.S. is using Claude through Anthropic’s partnership with controversial software company Palantir. As sources told Bloomberg, Claude is central to Palantir’s Maven Smart System, which provides real-time targeting for military operations against Iran. Because of its centrality to the war targeting, Claude won’t be phased out until the DoD has found a replacement…

To put this in perspective, current AIs often tell people that they might as well walk to the car wash to get their cars washed instead of driving there if it’s not very far away. They’ve had a shocking amount of trouble in the recent past figuring out that there are three Rs rather than just two in the word “strawberry.” No one has a right to be shocked if AI targeting generates results like 175 innocents (most of them young students between the ages of 7 and 12) losing their lives when the Shajareh Tayyebeh girls’ school was targeted on the first day of the war in Iran. [1]

Pabst writes:

The use of AI in military targeting has been controversial dating back at least to the Gaza war. Indeed, IDF forces largely ignored its AI targeting software’s 10% false positive rate when using its “Lavender” system to target and attack alleged militants in Gaza — killing an untold number of civilians in the process.

Now there is concern about its use in Iran. Leading up to the initial attack on Iran, the Washington Post reported that Maven, powered by Claude, proposed “hundreds” of targets for the U.S. military to strike, prioritized them in order of importance, and provided location coordinates for them — helping the U.S. carry out attacks quickly, and blunting Iran’s ability to respond in kind.

But what about oversight? It is not comforting that DoD Secretary Pete Hegseth exclaimed there were “no stupid rules of engagement” in the war at a press conference early this week.

The Pentagon’s Law of War Manual says the U.S. military must take “feasible precautions to verify that the targets [it plans to attack] are military objectives” such as enemy combatants. As these rules dictate, civilians and military medical and religious personnel, and locations like schools, hospitals, places of worship are not to be attacked.

Given the rapid deployment of AI in wartime, whether the U.S. military is truly taking “feasible precautions,” to ensure it is targeting true military objectives, rather than civilians, deserves scrutiny.

More bluntly:

If your mental model for what’s going on here is that a giant robot is shooting lasers at Iran, but every time it picks a new target a human soldier has to manually press a green APPROVE button, and the soldier is just constantly pressing APPROVE APPROVE APPROVE without looking very hard at where the robot is shooting….

…I’m sure this is a crass oversimplification in many ways…

…but honestly you’re probably closer to the truth than someone who gives a lot of credence to official pablum about how they’re being extremely careful.

And sticking with that analogy, the thing that led to “Secretary of War” Hegseth fighting with Anthropic’s executives, and Trump ranting about how Anthropic is a “RADICAL LEFT, WOKE COMPANY” that’s trying “TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS” was that, in the long term, Trump and Hegseth want to phase out the soldier with the green button and just let the giant robot fire at will.

So, Autonomy is no longer an outlandish possibility but an official goal of the federal government. The Trump administration is so angry about Anthropic’s squeamishness about “fully autonomous” murderbots that they tried to officially designate the company as a “national security risk.”

And Misalignment was always at least somewhat plausible.

That leaves Mind.

To decide whether an AI system could ever truly count as “having a mind,” first we have to figure out what it is for anything to truly count as “having a mind.” Anyone who knows a little about the philosophy of mind knows that this isn’t an easy thing to do.

Substance dualism holds that minds are non-physical entities that somehow interact with physical brains and bodies. Property dualism is a kind of halfway house between that view and materialism. It doesn’t stipulate a non-physical entity doing the thinking, feeling, and so on, but it does say that we as physical beings have mental properties that can’t be reduced to anything physical.

Even property dualism, though, runs up against problems about a principle philosophers call “the causal closure of the physical” (the principle, which certainly seems like an awfully important implicit assumption of the sciences, that physical effects have to have exclusively physical causes). If I feel pain and then I speak or type the words “the sensation of pain I’m experiencing right now is a reminder of why I’m a property dualist, this qualitative experience is surely irreducibly non-physical” then it sure seems like there’s a cause and effect connection between the pain itself and what’s going on with my vocal cords or typing fingers. But if there is, and the content of my statement is correct, then so much for the causal closure of the physical.

What about materialism? Even if (like me) you tend to assume that some materialist account of mind must be correct, the fleshed-out theories currently on the table might all be a bit unsatisfying. Functionalism (the view often assumed by AGI boosters) holds that mental states are nothing more than roles in input-output systems. This runs into problems like the Chinese Room Argument discussed below.

Alternately, you can revert to an older form of materialism that holds that mental states just are brain states. This has problems of its own like “Martian pain” examples. If we discover aliens who seem to have complex inner lives, are we really going to rule out a priori the idea that the aliens could experience pain on the grounds that they would surely have very different brains than ours and thus not have whatever brain state that mind-brain identity theory tells us “just is” what pain is?

It would be ridiculous to derive any substantive conclusion about a subject as complicated as philosophy of mind from such a short and superficial four-paragraph tour. (Note that I haven’t even begun to tease apart issues like whether “having a mind” in the sense of thinking in human-like or superhuman ways comes apart from “having a mind” in the sense of having mental experiences. This stuff gets very messy very quickly.) But I hope the tour at least gestures suggestively in the direction of one reason why it might be very hard to say whether the Mind Premise is correct. Before we can even evaluate Mind, we need to finish figuring out what makes us “truly count” as “having minds.”

A longstanding view of at least many functionalists excited about the possibility of machine intelligence was that we should accept any future AI as a mind if it passed a Turing Test. Without too much simplification, the idea there was that if the AI does a good enough job of tricking humans into thinking it is a human, we should accept that it’s achieved human-level intelligence.

The classic argument against this comes from the late philosopher of mind John Searle. In his 1980 article “Minds, Brains, and Programs,” Searle wrote:

Suppose that I’m locked in a room and given a large batch of Chinese writing. Suppose furthermore (as is indeed the case) that I know no Chinese, either written or spoken, and that I’m not even confident that I could recognize Chinese writing as Chinese writing distinct from, say, Japanese writing or meaningless squiggles. To me, Chinese writing is just so many meaningless squiggles.

Now suppose further that after this first batch of Chinese writing I am given a second batch of Chinese script together with a set of rules for correlating the second batch with the first batch. The rules are in English, and I understand these rules as well as any other native speaker of English. They enable me to correlate one set of formal symbols with another set of formal symbols, and all that ‘formal’ means here is that I can identify the symbols entirely by their shapes. Now suppose also that I am given a third batch of Chinese symbols together with some instructions, again in English, that enable me to correlate elements of this third batch with the first two batches, and these rules instruct me how to give back certain Chinese symbols with certain sorts of shapes in response to certain sorts of shapes given me in the third batch. Unknown to me, the people who are giving me all of these symbols call the first batch “a script,” they call the second batch a “story,” and they call the third batch “questions.” Furthermore, they call the symbols I give them back in response to the third batch “answers to the questions,” and the set of rules in English that they gave me, they call “the program.”

Now just to complicate the story a little, imagine that these people also give me stories in English, which I understand, and they then ask me questions in English about these stories, and I give them back answers in English. Suppose also that after a while I get so good at following the instructions for manipulating the Chinese symbols and the programmers get so good at writing the programs that from the external point of view – that is, from the point of view of somebody outside the room in which I am locked – my answers to the questions are absolutely indistinguishable from those of native Chinese speakers.

It would, Searle thought, be obviously wrong to describe this situation by saying that he “understood Chinese.” And he thought a closely parallel mistake was being made by anyone who thought an AI blowing through a Turing Test was sufficient to show that it had a genuine mental life.

Searle died last September. At the time, I remember thinking at least he lived long enough to see himself thoroughly vindicated. I don’t mean that everyone now agrees that functionalism is wrong (it’s still a very popular view) or that “Strong AI” is impossible (more people than ever think it’s on the horizon) but just that nearly everyone who’s held onto their sanity now agrees that, even if an AI eats Turing Tests for breakfast, that’s not a good enough reason to think it genuinely counts as a mind. Think back to the last couple weeks of your life. It’s very likely that you can think of at least a few borderline cases where you were in an online customer service chat or you saw some social media post and you genuinely had no idea whether the text or images you were interacting with were produced by a human being or an AI. It’s often obvious, but not always. Some degree of uncertainty has become routine.

When I first started reading and thinking about this stuff, I’d constantly encounter people who claimed to be unmoved by the Chinese Room case, or who would say things like “the man in the room might not understand Chinese, but the system as a whole understands Chinese” and thus resist the move to saying that a Turing Test-passing AI wasn’t sentient. In 2026, though, we’ve invented a special term for people who are tricked into believing their AIs are sentient because they do such a good imitation of human beings. We call it “AI psychosis.”

Increasingly, though, I’m not sure whether any of that matters for the August 29th question.

Let’s assume for the sake of argument that Searle was right not just about the part everyone not in the throes of AI psychosis now seems to agree he got right, but about the bigger and more controversial questions. Let’s assume that AI will never be conscious. Let’s even go ahead and assume that it will never “have a mind” in the sense of having at least human-level cognitive powers but without having conscious experiences of its exercise of those powers (or of anything else). As I awkwardly acknowledged earlier, I haven’t been separating out those two issues even though I probably should. But let’s just assume that no AI will ever have any of that.

Why exactly is that supposed to make existential risks from AI any less worrying? If a giant robot was shooting lasers in your general direction, would you feel better if you were told that it was mindless?

To put the point more carefully:

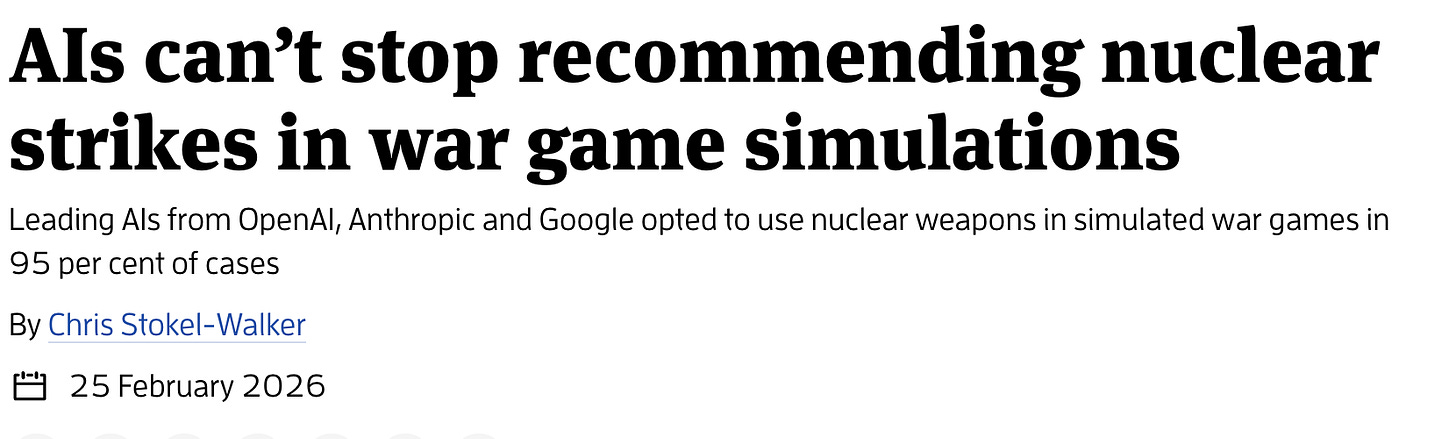

Mind, Misalignment, and Autonomy are all assumptions of standard presentations of the worry. But I’m not sure why Autonomy alone can’t get it done, along with the all too plausible assumption that robustly mindless AI might often act in ways that aren’t aligned with human interests (let’s call that weak claim Misalignment*). We don’t have to speculate about Misalignment*. We’ve seen it play out, in actually existing wars, in the here and now. And given more and more levers of autonomous decision-making being turned over to the machines, the consequences (for Americans and Europeans and Israelis, not “just” for Iranians or Palestinians) could get more and more dire. An article by Chris Stokel-Walker for New Scientist, dated three days before the beginning of the war in Iran, had a jaw-dropping headline.

Stokel-Walker writes:

Kenneth Payne at King’s College London set three leading large language models – GPT-5.2, Claude Sonnet 4 and Gemini 3 Flash – against each other in simulated war games. The scenarios involved intense international standoffs, including border disputes, competition for scarce resources and existential threats to regime survival.

The AIs were given an escalation ladder, allowing them to choose actions ranging from diplomatic protests and complete surrender to full strategic nuclear war. The AI models played 21 games, taking 329 turns in total, and produced around 780,000 words describing the reasoning behind their decisions.

In 95 per cent of the simulated games, at least one tactical nuclear weapon was deployed by the AI models.

Do GPT-5.2, Claude Sonnet 4, or Gemini 3 Flash meet any of the standards people typically have in mind when they throw around phrases like “AGI” or “Strong AI”? No.

But I can’t see why that would make us all any less dead if the

Human decisions are removed from strategic defense

part of the August 29th scenario ever came to pass.

I’m not saying that this will happen. I think it probably won’t. But the fact that right now, in 2026, the president of the United States can rail against “RADICAL LEFT, WOKE” tech executives for drawing the line at “fully autonomous” killing machines, and the whole thing barely makes a political ripple, should make us all take the possibility a bit more seriously.


[1] Writing in The Guardian, Kevin T. Baker argues that descriptions like the one I’ve quoted overstate the importance of LLMs like Claude in the Maven targeting system. While he acknowledges the system’s LLM “layer,” he argues that the part of Maven that always “mattered” more in the identification of targets was an older, cruder form of AI more similar to the systems that do things like “recognize your cat in a photo library.” I’ll leave it to the reader to decide if that makes them feel any better.

From Philosophy for the People w/Ben Burgis via this RSS feed